9 Spatial Computing Concepts Moving Beyond Headset Hardware

Spatial computing has long been synonymous with bulky headsets and immersive virtual reality, but the field is moving beyond those hardware constraints. Instead of relying on head-mounted displays, newer approaches take a more integrated, ambient view of digital-physical interaction, which changes how we think about and interact with digital information in three-dimensional space. Rather than requiring users to put on specialized equipment, emerging concepts embed intelligence directly into the environment and connect the physical and digital worlds more directly. From gesture recognition systems that operate without cameras to neural interfaces that bypass visual displays entirely, these ideas could broaden access to spatial computing while expanding its potential applications. The sections below cover nine concepts that are reshaping the field, pointing toward a future in which digital interaction is integrated so thoroughly into daily life that the technology itself recedes from view.

1. Ambient Spatial Intelligence - The Invisible Computing Layer

Ambient spatial intelligence embeds computing capabilities directly into physical environments, reducing or removing the need for personal devices and wearable hardware. The concept relies on distributed sensor networks, edge computing, and AI algorithms to create spaces that can interpret and respond to human presence and behavior in real time. Unlike traditional spatial computing, which requires users to wear headsets or carry devices, ambient systems use ceiling-mounted cameras, floor-embedded pressure sensors, wall-integrated displays, and other environmental sensors to build a shared picture of what is happening in a space. These systems can track multiple users simultaneously, infer their intentions from gesture and movement patterns, and provide contextual information through environmental displays, audio cues, or haptic feedback integrated into furniture and surfaces. The technology extends beyond simple motion detection to behavioral analysis, emotional state recognition, and predictive modeling that tries to anticipate user needs before they are explicitly expressed. Research institutions and tech companies are developing prototype environments in which the room itself becomes the interface, adjusting its lighting, temperature, acoustics, and even physical configuration in response to occupant needs and activities, so that the line between built environment and digital interface blurs.
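As a rough illustration of how readings from different room sensors might be combined into one position estimate, here is a minimal sketch using inverse-variance weighting. This is a standard fusion technique chosen for illustration, not something the systems described above are confirmed to use. The `Estimate` type, the `fuse` function, and the sample readings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    """A 2D position estimate in metres with an isotropic variance in m^2."""
    x: float
    y: float
    variance: float


def fuse(estimates):
    """Combine independent estimates by inverse-variance weighting.

    Sensors with lower variance (more trustworthy readings) get more weight.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / e.variance for e in estimates]
    total = sum(weights)
    x = sum(w * e.x for w, e in zip(weights, estimates)) / total
    y = sum(w * e.y for w, e in zip(weights, estimates)) / total
    # The fused variance is smaller than any single input variance.
    return Estimate(x, y, 1.0 / total)


# Hypothetical readings for one occupant (values are assumptions).
camera = Estimate(x=2.0, y=3.0, variance=0.04)  # ceiling-mounted camera
floor = Estimate(x=2.2, y=3.1, variance=0.01)   # floor pressure sensor
fused = fuse([camera, floor])
print(f"fused position: ({fused.x:.2f}, {fused.y:.2f}) m")
```

The fused estimate lands closer to the floor sensor reading because that sensor was assumed to be more precise. A real deployment would also need to handle time alignment and correlated errors, which this sketch ignores.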

2. Neural Interface Integration - Direct Brain-Computer Spatial Interaction

Neural interface technology opens a new frontier in spatial computing by establishing direct communication pathways between the human brain and digital spatial environments, bypassing traditional visual and tactile interfaces. Brain-computer interfaces (BCIs) are being developed to decode spatial intentions so that users can navigate, manipulate, and interact with three-dimensional digital spaces through thought alone. These systems use high-density electroencephalography (EEG), functional near-infrared spectroscopy (fNIRS), and emerging implantable neural mesh technologies to capture and interpret neural signals associated with spatial cognition, movement intention, and object manipulation. The goal goes beyond simple command recognition to complex spatial reasoning, letting users mentally construct, modify, and navigate virtual environments as intuitively as they imagine physical spaces. Researchers are also exploring neural protocols that would translate spatial memory patterns into digital reconstructions, so that users could recreate and share mental maps of physical locations or entirely imagined spaces. This approach is especially promising for individuals with mobility limitations, because it offers access to spatial computing experiences without physical movement or traditional input devices. Combining machine learning with neural interfaces yields adaptive systems that learn an individual user's spatial thinking patterns and become more responsive and accurate over time, while encryption and local processing are used to protect the privacy and security of neural data.

3. Haptic Fabric Networks - Touch-Based Spatial Computing

Haptic fabric networks represent an innovative approach to spatial computing that transforms textiles and soft materials into interactive surfaces capable of providing rich tactile feedback and spatial information. These systems integrate microscopic actuators, sensors, and conductive fibers directly into fabric structures, creating clothing, furniture upholstery, and architectural elements that can simulate texture, temperature, pressure, and even complex spatial geometries through touch. Advanced haptic fabrics utilize shape-memory alloys, piezoelectric materials, and pneumatic micro-chambers to generate precise tactile sensations that can represent virtual objects, spatial boundaries, and environmental conditions. Users can feel the texture of virtual surfaces, experience the resistance of digital objects, and receive spatial navigation cues through clothing that responds to their position and orientation in both physical and virtual spaces. The technology extends beyond simple vibration patterns to include sophisticated haptic rendering that can simulate material properties like roughness, elasticity, and thermal characteristics, enabling users to distinguish between different virtual materials and objects through touch alone. Research teams are developing full-body haptic suits that provide comprehensive spatial feedback, allowing users to feel virtual rain, wind, or the presence of other users in shared digital spaces. These fabric-based systems are particularly valuable for accessibility applications, providing spatial information to visually impaired users through rich tactile feedback, and for professional training scenarios where haptic feedback enhances learning and skill development in fields ranging from medical procedures to industrial maintenance.

4. Acoustic Spatial Mapping - Sound-Based Environmental Understanding

Acoustic spatial mapping leverages advanced audio processing and machine learning algorithms to create detailed three-dimensional maps of environments using only sound, opening new possibilities for spatial computing that operates independently of visual systems. This technology employs sophisticated echolocation principles, similar to those used by bats and dolphins, combined with ambient sound analysis to understand spatial relationships, object locations, and environmental characteristics. Advanced microphone arrays and directional audio sensors capture subtle acoustic reflections, reverberation patterns, and sound propagation characteristics that reveal the shape, size, and material properties of surrounding spaces and objects. Machine learning algorithms process these acoustic signatures to generate real-time spatial maps that can identify room dimensions, furniture placement, surface materials, and even detect human movement and gestures through sound analysis. The system can distinguish between different types of surfaces based on their acoustic properties, recognize the presence and location of people through breathing patterns and micro-movements, and track object interactions through contact sounds and vibrations. This approach is particularly valuable for creating spatial computing experiences in environments where visual systems are impractical or unavailable, such as in complete darkness, underwater environments, or situations where privacy concerns prohibit camera-based tracking. Researchers are developing applications that combine acoustic mapping with spatial audio rendering to create immersive experiences where users can navigate and interact with digital content using only sound-based interfaces, making spatial computing accessible to visually impaired users while providing new interaction modalities for all users.
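The echolocation principle mentioned above reduces, in its simplest form, to time-of-flight arithmetic: a sound pulse travels to a surface and back, so the distance is half the round-trip time multiplied by the speed of sound. The sketch below shows only that core calculation. The function names and sample delays are hypothetical, and real systems must also separate overlapping echoes and account for temperature.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate value for dry air at about 20 degrees C


def echo_distance(round_trip_s, speed=SPEED_OF_SOUND_M_S):
    """Distance in metres to a reflecting surface, from the echo round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return speed * round_trip_s / 2.0


def room_extent(delay_a_s, delay_b_s):
    """Distance between two opposite walls, measured from a sensor between them."""
    return echo_distance(delay_a_s) + echo_distance(delay_b_s)


# Hypothetical echo delays of 10 ms and 20 ms from two opposite walls.
print(f"wall A: {echo_distance(0.010):.3f} m")
print(f"room width: {room_extent(0.010, 0.020):.3f} m")
```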

5. Electromagnetic Field Manipulation - Invisible Spatial Interfaces

Electromagnetic field manipulation creates invisible, three-dimensional interaction zones using controlled electromagnetic fields, so that users can interact with digital content through hand and body movements without physical contact or visible interface elements. The technology uses arrays of field generators, typically operating in radio frequency ranges, to define spatial zones in which conductive objects such as human hands and bodies can be detected and tracked, in some systems with precision approaching the millimeter scale at short range. Signal processing algorithms analyze the disturbances that user movements cause in the field and translate them into spatial coordinates and gesture recognition data. A system can maintain multiple independent interaction volumes at once, which supports multi-user scenarios and gesture vocabularies that extend well beyond pointing and clicking. Unlike camera-based tracking, field-based sensing works in complete darkness, is unaffected by visual occlusion, and can pass through many non-conductive materials, which makes it well suited to integration into vehicles, industrial equipment, and architectural elements. Proponents also envision haptic feedback that gives users tactile confirmation of their interactions with invisible interfaces, although sensing fields at these strengths do not by themselves produce a sensation of touch, so that feedback would likely require a separate actuation mechanism. Research applications include automotive interfaces where drivers control navigation and entertainment systems through mid-air gestures without taking their eyes off the road, medical environments where sterile interaction is crucial, and industrial settings where workers need to access digital controls while wearing protective equipment that would interfere with traditional interfaces.

6. Distributed Projection Systems - Environmental Display Networks

Distributed projection systems are revolutionizing spatial computing by transforming entire environments into dynamic display surfaces, eliminating the need for traditional screens or personal viewing devices while creating immersive visual experiences that adapt to user position and context. These systems employ networks of miniaturized projectors, often no larger than smartphones, strategically positioned throughout spaces to create seamless visual overlays on walls, floors, ceilings, and even three-dimensional objects. Advanced computer vision algorithms continuously track user positions and viewing angles, dynamically adjusting projection content to maintain proper perspective and visual coherence as people move through the space. The technology utilizes sophisticated color correction and brightness adaptation to account for different surface materials and ambient lighting conditions, ensuring consistent visual quality across diverse projection surfaces. Multiple users can simultaneously experience personalized content, with the system calculating individual viewing perspectives and rendering appropriate visual information for each person's position and orientation. The projectors can work in concert to create large-scale immersive environments or operate independently to provide localized information displays that follow users as they move through spaces. Recent developments include ultra-short-throw projectors that can be embedded in furniture and architectural elements, creating surfaces that can instantly transform from mundane objects into interactive displays. These systems are particularly valuable for educational environments where entire classrooms can become immersive learning spaces, retail environments where products can be enhanced with dynamic information overlays, and collaborative workspaces where teams can interact with shared digital content projected onto any available surface.
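The brightness adaptation described above can be illustrated with a simple radiometric model. It is an assumption made for this sketch, not a documented algorithm of any particular product. If a surface reflects a fraction `reflectance` of the projected light and also returns some `ambient` light, the viewer sees roughly `reflectance * projected + ambient`. Solving for `projected` gives the value the projector should emit to hit a target brightness. All names and values below are hypothetical, and intensities are normalized to the range 0 to 1.

```python
def compensate(target, reflectance, ambient):
    """Projector output needed so that the surface appears at `target` brightness.

    Assumed model: observed = reflectance * projected + ambient.
    The result is clamped to the projector's normalized output range [0, 1].
    """
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must be in (0, 1]")
    needed = (target - ambient) / reflectance
    return min(1.0, max(0.0, needed))


# Hypothetical surfaces: a light wall and a darker wooden panel.
print(compensate(target=0.5, reflectance=0.8, ambient=0.1))  # light wall
print(compensate(target=0.9, reflectance=0.5, ambient=0.1))  # dark panel, clamps at full output
```

The clamped second case shows a practical limit: on dark surfaces or in bright rooms, some target brightness levels are simply out of reach for a given projector.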

7. Biometric Spatial Tracking - Body-Centric Computing

Biometric spatial tracking represents a sophisticated approach to spatial computing that uses the human body's natural biological signals and characteristics as both input devices and spatial reference points, creating personalized computing experiences that adapt to individual physiological states and spatial behaviors. This technology integrates multiple biometric sensors including heart rate monitors, galvanic skin response detectors, eye tracking cameras, and muscle tension sensors to create a comprehensive understanding of user state and spatial interaction patterns. Advanced machine learning algorithms analyze correlations between biometric data and spatial behaviors, learning to predict user intentions and preferences based on physiological responses to different spatial environments and digital content. The system can detect stress levels, attention states, fatigue, and emotional responses, automatically adjusting spatial computing experiences to optimize user comfort and effectiveness. Eye tracking components provide precise spatial reference points, allowing the system to understand exactly where users are looking and focusing their attention within three-dimensional spaces. Muscle tension sensors can detect micro-movements and gesture intentions before they become visible actions, enabling predictive interfaces that respond to user intentions with minimal latency. The technology extends to include gait analysis, posture monitoring, and breathing pattern recognition, creating spatial profiles that can identify individuals and adapt environments to their specific needs and preferences. Privacy protection is paramount in these systems, with advanced encryption and local processing ensuring that sensitive biometric data remains secure while still enabling personalized spatial computing experiences that evolve and improve over time based on individual usage patterns and physiological responses.
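One concrete example of turning a heart rate signal into a state indicator is RMSSD, a widely used heart rate variability metric computed from the intervals between successive beats. Lower values are generally associated with higher physiological arousal or stress. The sketch below computes RMSSD. The `low_hrv` helper and its 20 ms threshold are purely hypothetical placeholders, since meaningful thresholds depend on the individual and the measurement setup.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between beat intervals (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def low_hrv(rr_intervals_ms, threshold_ms=20.0):
    """Flag low variability. The default threshold is an illustrative assumption."""
    return rmssd(rr_intervals_ms) < threshold_ms


# Hypothetical beat-to-beat intervals in milliseconds.
sample = [800, 810, 790, 805]
print(f"RMSSD: {rmssd(sample):.1f} ms, low HRV: {low_hrv(sample)}")
```

A system like the one described would combine a signal of this kind with other inputs, such as skin response or gaze, rather than act on a single metric.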

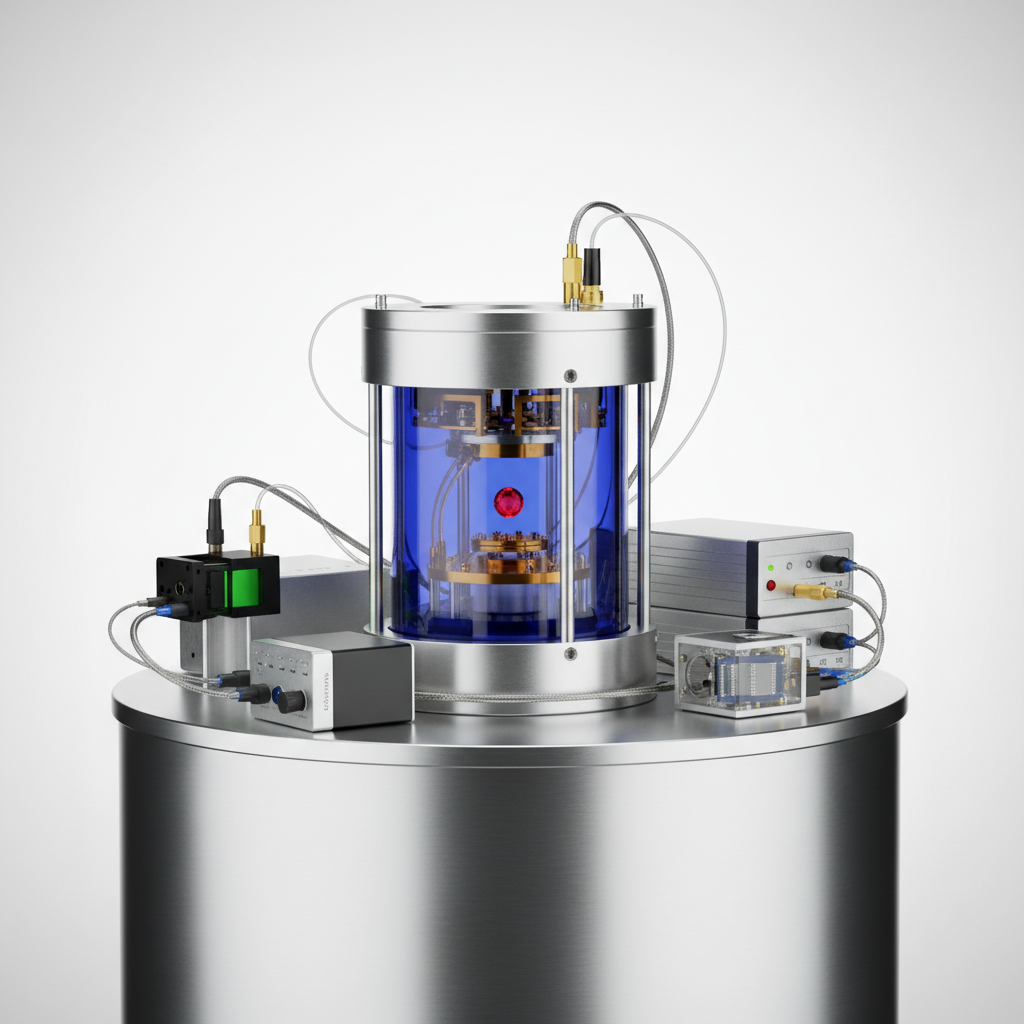

8. Quantum Sensing Networks - Ultra-Precise Spatial Detection

Quantum sensing networks sit at the frontier of spatial computing precision, using quantum mechanical properties to measure space and the environment more accurately than classical sensors can, which opens up forms of interaction at scales and sensitivities that were previously out of reach. These systems exploit quantum phenomena such as superposition, entanglement, and quantum interference to build sensors that can detect minute changes in gravitational fields, magnetic variations, and very small movements within spatial environments. Quantum accelerometers and gyroscopes promise position and orientation tracking with very low drift, potentially down to fractions of a millimeter and micro-degrees, for applications that demand extreme precision such as surgical guidance, precision manufacturing, and scientific instrumentation. The technology extends beyond traditional spatial tracking to quantum-enhanced environmental sensing, which researchers are exploring for detecting chemical compositions, material stress patterns, and even biological processes. Networks of quantum sensors can be distributed throughout an environment to create spatial awareness systems that capture not only the position and movement of objects but also some of their physical properties and states. Some sensor designs are also being investigated for conditions that challenge conventional sensors, including extreme temperatures, high-radiation environments, and situations requiring electromagnetic silence. In multi-sensor and multi-user scenarios, entanglement between sensors can in principle improve the precision of distributed measurements. Entanglement does not transmit information instantaneously, however, so synchronizing the network still relies on conventional communication links. Research applications include quantum-enhanced medical imaging that aims to track cellular-level changes in real time, architectural monitoring systems that detect structural stress before it becomes visible, and environmental monitoring networks that identify pollution sources and track their spatial distribution.
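To see why accelerometer precision matters for position tracking, consider plain classical kinematics: a constant bias `b` in measured acceleration, integrated twice, produces a position error of `0.5 * b * t**2`. The sketch below is not a model of any quantum sensor. It only compares two hypothetical bias levels to show how quickly small errors accumulate.

```python
def position_drift_m(bias_m_s2, elapsed_s):
    """Position error in metres from integrating a constant acceleration bias twice."""
    return 0.5 * bias_m_s2 * elapsed_s ** 2


# Hypothetical bias levels, compared over one minute without external correction.
for label, bias in [("bias 1e-3 m/s^2", 1e-3), ("bias 1e-6 m/s^2", 1e-6)]:
    drift = position_drift_m(bias, 60.0)
    print(f"{label}: {drift:.4f} m of drift after 60 s")
```

Under these assumed numbers, the larger bias accumulates about 1.8 m of error in a minute, while the smaller one stays under 2 mm, which is the kind of gap that low-drift inertial sensing is meant to close.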

9. Molecular Computing Interfaces - Chemical-Based Spatial Interaction

Molecular computing interfaces are perhaps the most futuristic approach to spatial computing, using chemical reactions, molecular recognition, and biological processes to create spatial interaction systems that operate at the molecular and cellular level. This emerging field combines synthetic biology, nanotechnology, and chemical engineering to develop computing systems that can interface directly with biological processes and chemical environments. These systems use engineered proteins, DNA computing elements, and synthetic molecular machines to detect and respond to chemical gradients, molecular concentrations, and biological signals within three-dimensional spaces. The technology can create spatial maps based on chemical composition, tracking the distribution and movement of specific molecules or biological markers throughout an environment. Proposed applications include biocompatible sensors integrated into living tissue to provide spatial information about biological processes, environmental monitoring systems that detect and track chemical pollutants at the molecular level, and medical diagnostic tools that map the spatial distribution of disease markers within the human body. The molecular approach enables spatial computing in environments where traditional electronic systems cannot operate, such as inside living cells, in corrosive chemical environments, or in situations requiring complete biocompatibility. Researchers are developing molecular robots that can navigate three-dimensional spaces at the cellular level, carrying out programmed tasks and reporting spatial information about their microscopic environments. These systems could potentially interface with the human nervous system at the cellular level, creating direct biological connections to spatial computing networks that might enable new forms of human-computer interaction. The technology also opens possibilities for self-assembling spatial computing systems that grow and adapt their structure to environmental conditions, forming dynamic networks that reconfigure themselves to optimize spatial sensing and interaction as requirements change.