8 Quantum Computing Milestones That Changed What We Thought Was Possible

Quantum computing represents one of humanity's most audacious technological leaps, fundamentally challenging our understanding of computation, physics, and the very nature of information processing. Unlike classical computers that process information in binary bits of 0s and 1s, quantum computers harness the bizarre principles of quantum mechanics—superposition, entanglement, and quantum interference—to manipulate quantum bits or "qubits" that can exist in multiple states simultaneously. This revolutionary approach to computation has transformed from theoretical speculation in the 1980s to tangible reality, with each breakthrough milestone reshaping our perception of what's computationally possible. The journey from Richard Feynman's visionary 1981 proposal to simulate quantum systems using quantum computers to today's sophisticated quantum processors has been marked by extraordinary achievements that have consistently exceeded expectations and opened new frontiers in cryptography, optimization, drug discovery, and artificial intelligence. These pivotal moments haven't just advanced technology; they've fundamentally altered our understanding of computational limits and revealed pathways to solving problems once deemed intractable by classical means.

1. Shor's Algorithm (1994) - The Cryptographic Game Changer

Peter Shor's 1994 algorithm sent shockwaves through the cybersecurity world by showing that a quantum computer could efficiently factor large integers, the problem whose hardness underpins the RSA encryption that secures much of our digital communication. This theoretical breakthrough revealed that quantum computers could potentially break the cryptographic systems protecting everything from online banking to government communications, challenging the assumption that factoring would remain computationally intractable in practice. Shor's algorithm reduces factoring to finding the period of a modular exponentiation function, then uses quantum superposition and the quantum Fourier transform to find that period in polynomial time; the best known classical method, the general number field sieve, still needs sub-exponential (superpolynomial) time. The implications were staggering: while classical computers are estimated to need billions of years to factor a 2048-bit number, a sufficiently large, error-corrected quantum computer could in principle accomplish this in hours or days. This milestone was more than a theoretical curiosity: it sparked a global race to develop quantum-resistant cryptography and accelerated investment in quantum computing research. The algorithm's elegance lies in its ability to transform a classically intractable problem into a manageable quantum computation, showing that quantum computers are not just faster versions of classical machines but a fundamentally different computational paradigm that can efficiently solve some problems long considered out of practical reach.
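
To make the period-finding idea concrete, here is a minimal classical sketch of the number-theoretic wrapper around Shor's algorithm. The function names `find_order` and `factor_from_order` are illustrative, not from any library, and the order is found by brute force, which is exactly the step a quantum computer would replace with the quantum Fourier transform. It only works for tiny numbers.

```python
from math import gcd

def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This loop is the expensive part that
    Shor's quantum period-finding routine would replace.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(n: int, a: int):
    """Try to split n using base a; return a factor pair or None."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g          # lucky guess: a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None               # odd order: retry with a different base
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None               # a^(r/2) is -1 mod n: retry with a different base
    p = gcd(half - 1, n)          # guaranteed to be a nontrivial factor here
    return p, n // p

print(factor_from_order(15, 7))
```

With `n = 15` and base `a = 7`, the order is 4, so the code computes `gcd(7**2 - 1, 15)` and recovers the factors 3 and 5. A base such as 14 returns `None`, which is why the full algorithm retries with random bases.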

2. First Quantum Error Correction (1995-1996) - Taming Quantum Fragility

The development of quantum error correction codes by Peter Shor and Andrew Steane in 1995-1996 addressed one of quantum computing's most formidable challenges: the extreme fragility of quantum information. Quantum states are notoriously delicate, susceptible to decoherence from environmental interference, making reliable quantum computation seem nearly impossible. These pioneering error correction schemes demonstrated that it was theoretically possible to protect quantum information from errors without directly measuring the quantum states—a seemingly paradoxical achievement given that quantum measurement typically destroys superposition. The Shor code and Steane code showed how to encode a single logical qubit across multiple physical qubits, enabling error detection and correction while preserving quantum properties. This breakthrough was revolutionary because it proved that quantum computers could, in principle, perform arbitrarily long computations with arbitrarily high accuracy, provided sufficient physical qubits and low enough error rates. The milestone established the theoretical foundation for fault-tolerant quantum computing, showing that quantum computers weren't destined to be forever limited by decoherence. Instead, it revealed a pathway to scalable quantum computation through redundancy and sophisticated error correction protocols, fundamentally changing the perception of quantum computing from a fragile curiosity to a potentially robust computational platform capable of sustained operation.
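
The idea of detecting an error without reading the encoded value can be illustrated with the three-qubit bit-flip repetition code, which is simpler than the Shor and Steane codes named above (Shor's nine-qubit code uses it as a building block). The sketch below is a purely classical toy: it tracks only bit flips on basis states, ignores phase errors and superposition, and all function names are illustrative.

```python
def encode(bit: int) -> list:
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]

def syndrome(block: list) -> tuple:
    """Parities of neighbouring pairs.

    These two checks say where a flip happened, but they are identical
    for logical 0 and logical 1, so they reveal nothing about the data.
    """
    return (block[0] ^ block[1], block[1] ^ block[2])

# Map each syndrome to the index that should be flipped back (None = no error seen).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(block: list) -> list:
    """Undo a single bit flip using only the syndrome."""
    fixed = list(block)
    index = CORRECTION[syndrome(fixed)]
    if index is not None:
        fixed[index] ^= 1
    return fixed

def decode(block: list) -> int:
    """Majority vote over the three physical bits."""
    return int(sum(block) >= 2)

# Flip each position in turn and check that the logical bit survives.
for logical in (0, 1):
    for position in range(3):
        noisy = encode(logical)
        noisy[position] ^= 1
        assert decode(correct(noisy)) == logical
```

The code corrects any single flip but fails if two bits flip at once, which is why practical schemes need many physical qubits per logical qubit and low physical error rates.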

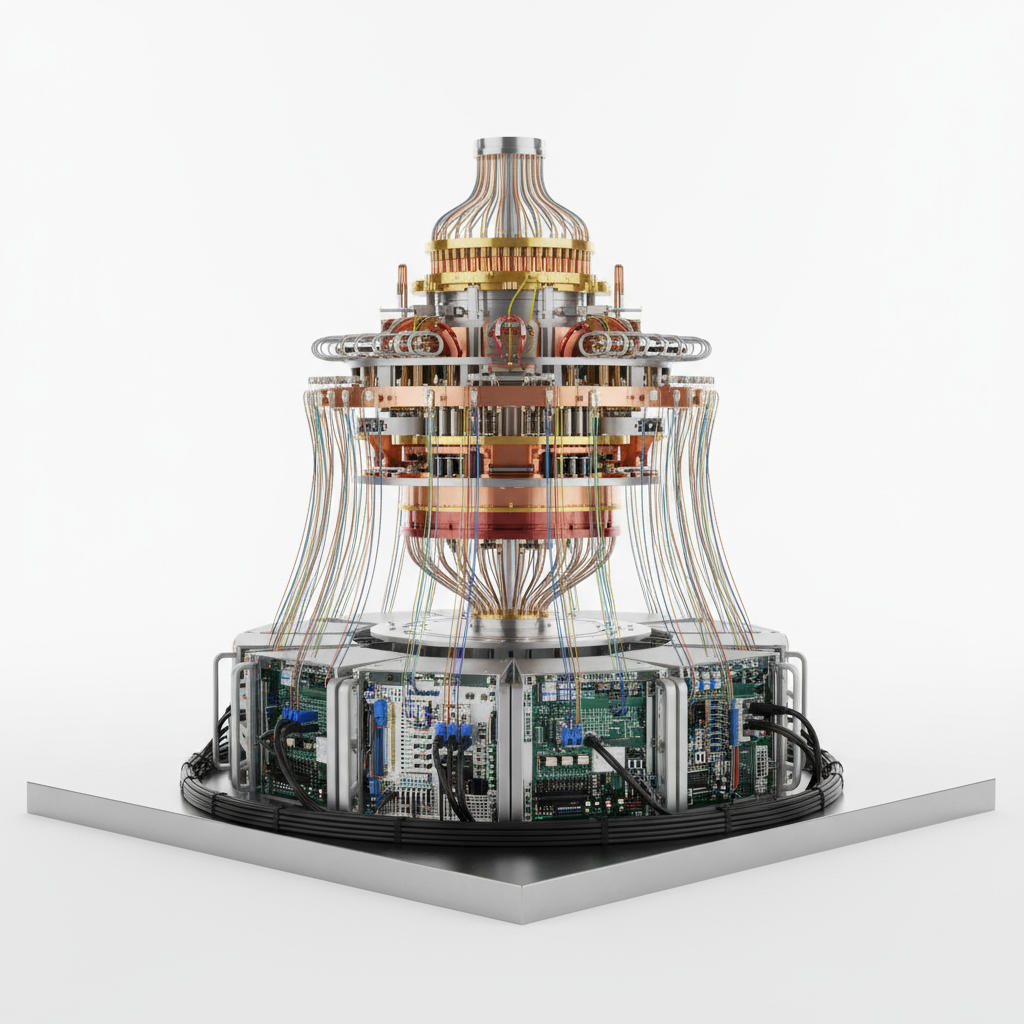

3. IBM's 5-Qubit Quantum Computer on the Cloud (2016) - Democratizing Quantum Access

IBM's revolutionary decision to make quantum computing accessible through the cloud in 2016 marked a paradigm shift from exclusive laboratory research to global democratization of quantum technology. The IBM Quantum Experience platform allowed researchers, students, and curious individuals worldwide to run actual quantum algorithms on real quantum hardware for the first time, breaking down the barriers that had confined quantum computing to elite research institutions. This 5-qubit superconducting quantum processor, while modest in scale, represented a monumental leap in accessibility and educational impact. Users could design quantum circuits using an intuitive drag-and-drop interface, execute them on genuine quantum hardware, and observe real quantum phenomena including superposition, entanglement, and quantum interference. The platform's impact extended far beyond its technical specifications: it catalyzed a global quantum education movement, inspired countless researchers to enter the field, and demonstrated that quantum computers could operate reliably enough for public access. Within months, thousands of users from over 100 countries were conducting quantum experiments, creating an unprecedented global laboratory for quantum research. This milestone fundamentally changed the quantum computing landscape by transforming it from an esoteric field confined to a small number of specialized laboratories into a vibrant, globally accessible platform that sparked innovation, education, and collaboration on an unprecedented scale.
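
A typical first experiment on a small device like this is a two-qubit Bell state, which shows superposition and entanglement in one circuit. The sketch below is not IBM's interface or API; it is a hand-rolled, noise-free state-vector calculation in plain Python, with illustrative function names, showing what such a circuit (a Hadamard gate followed by a CNOT) would ideally produce.

```python
from math import sqrt

# Two-qubit state vector, amplitudes ordered |00>, |01>, |10>, |11>
# (left bit = qubit 0, right bit = qubit 1).

def hadamard_on_qubit0(state):
    """Apply a Hadamard gate to qubit 0, putting it into superposition."""
    a00, a01, a10, a11 = state
    s = 1 / sqrt(2)
    return [s * (a00 + a10), s * (a01 + a11),
            s * (a00 - a10), s * (a01 - a11)]

def cnot_0_to_1(state):
    """CNOT with qubit 0 as control: swaps the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def probabilities(state):
    """Measurement probabilities for each basis state."""
    return [abs(amplitude) ** 2 for amplitude in state]

bell = cnot_0_to_1(hadamard_on_qubit0([1, 0, 0, 0]))
print(probabilities(bell))
```

In the ideal case only `|00>` and `|11>` appear, each with probability of about one half, so the two qubits always agree when measured. On real hardware, noise adds a small share of `|01>` and `|10>` outcomes.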

4. Google's Quantum Supremacy Achievement (2019) - The Computational Milestone

Google's announcement of quantum supremacy in October 2019 marked a historic inflection point: for the first time, a quantum computer was reported to outperform classical computers on a specific computational task. Using its 53-qubit Sycamore processor, Google's team completed a specialized sampling problem in 200 seconds that, by Google's own estimate, would have taken the world's most powerful supercomputer approximately 10,000 years using the best classical algorithms known at the time. The task involved generating random quantum circuits and sampling their outputs, a problem specifically designed to showcase quantum computational advantages while being extremely difficult for classical computers to simulate. The claim was contested. Critics argued that the chosen problem lacked practical applications and that improved classical algorithms could narrow the gap; IBM, for example, estimated that an optimized simulation on a large supercomputer could finish in about 2.5 days. Even so, the significance of the result held up: a quantum processor had carried out, in minutes, a computation in its native domain that strained the largest classical machines. This breakthrough validated decades of theoretical predictions and engineering efforts, showing that quantum computers were no longer just theoretical constructs but working computational devices. The achievement catalyzed massive investment in quantum technologies, accelerated research timelines, and shifted the conversation from "if" quantum computers would surpass classical computers to "when" and "for which applications."

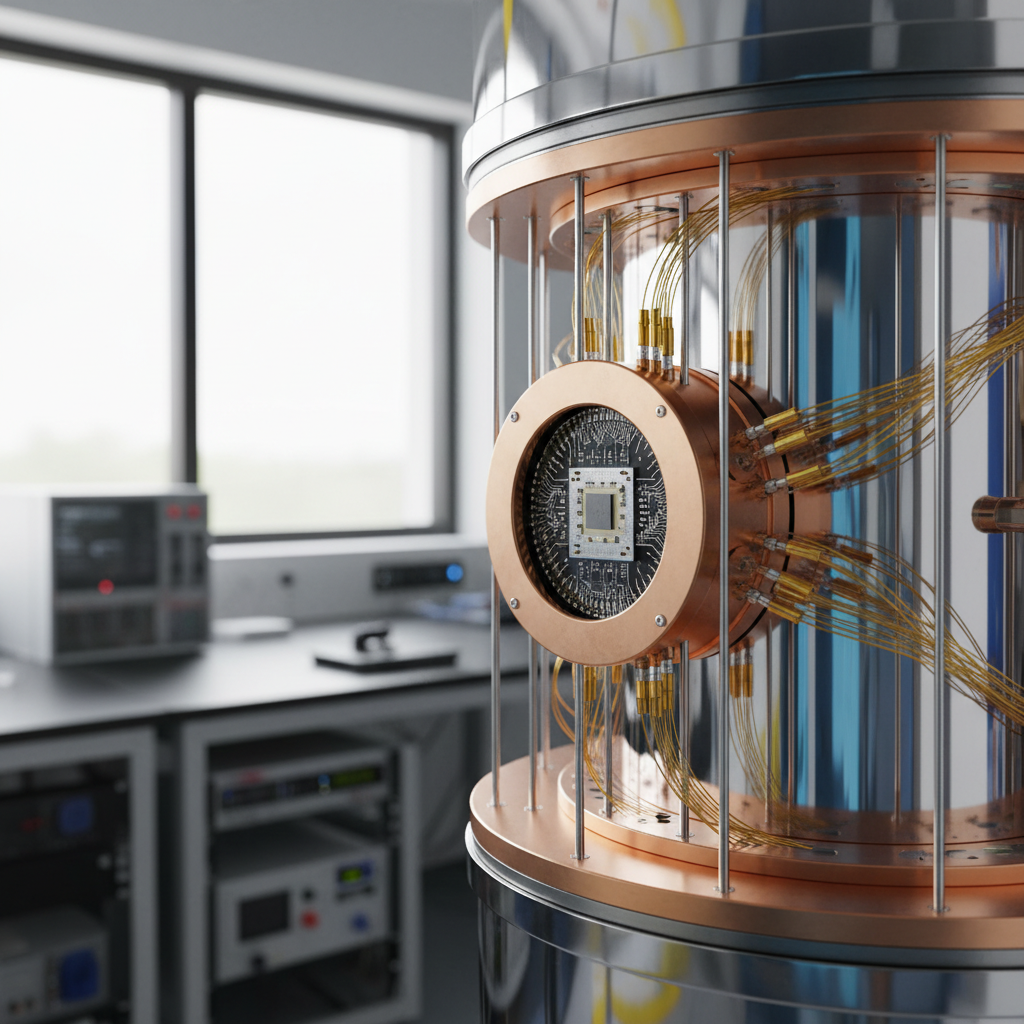

5. IonQ's Trapped Ion Breakthrough (2020) - Precision and Scalability

IonQ's development of high-fidelity trapped ion quantum computers in 2020 demonstrated that quantum computing could achieve remarkable precision and scalability through alternative technological approaches. Unlike superconducting qubits that require extreme cooling, trapped ion systems use electromagnetic fields to confine individual ions in vacuum chambers, manipulating their quantum states with precisely controlled laser pulses. IonQ's breakthrough achieved gate fidelities exceeding 99.5% and demonstrated full connectivity between qubits—meaning any qubit could interact directly with any other qubit without requiring complex routing through intermediate qubits. This architectural advantage enabled more efficient quantum algorithms and reduced the overhead associated with quantum error correction. The company's systems achieved impressive algorithmic quantum volume metrics, indicating superior performance on practical quantum computing benchmarks rather than specialized tasks. Their trapped ion approach proved that quantum computers could maintain coherence and precision while scaling to larger numbers of qubits, addressing critical concerns about the viability of different quantum computing technologies. The breakthrough demonstrated that multiple technological pathways could lead to practical quantum computing, each with unique advantages for different applications. IonQ's success validated the trapped ion approach as a serious contender for fault-tolerant quantum computing and showed that the quantum computing landscape would likely be diverse, with different technologies optimized for different computational tasks and operational requirements.

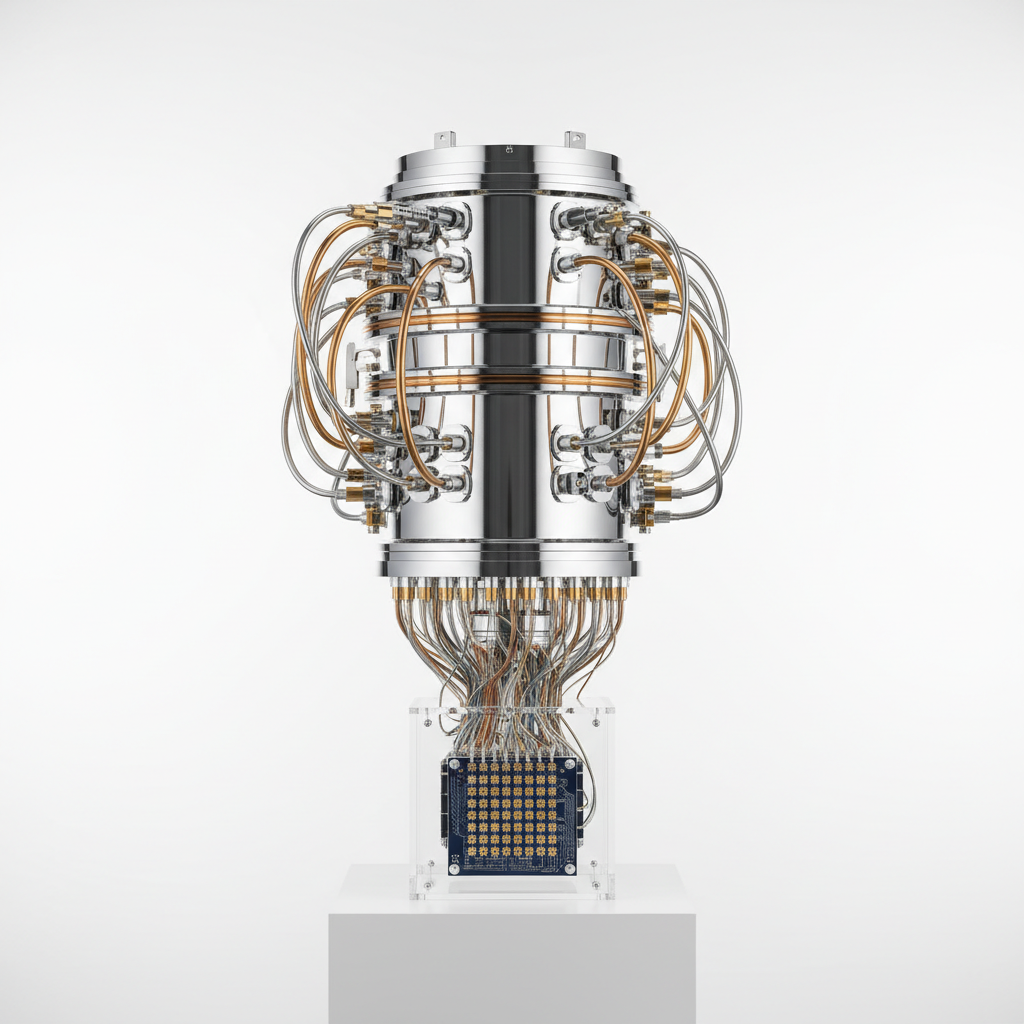

6. IBM's 127-Qubit Eagle Processor (2021) - Scaling Beyond Classical Simulation

IBM's unveiling of the 127-qubit Eagle processor in November 2021 gave the company its first processor with more than 100 qubits and pushed well past the point where brute-force classical simulation of general quantum circuits is practical. This milestone represented a fundamental scaling breakthrough, because classical computers cannot store the full quantum state of systems with more than approximately 50-60 qubits: the memory required doubles with every added qubit. The Eagle processor's 127 qubits span a state space of 2^127 basis states, roughly 1.7 × 10^38, far more than any classical memory could hold amplitude by amplitude. This achievement wasn't just about raw qubit count; IBM simultaneously improved qubit quality, connectivity, and control systems to create a more useful quantum computing platform. The processor incorporated advanced error mitigation techniques, improved qubit fabrication processes, and sophisticated control electronics to maintain quantum coherence across the entire system. Eagle's architecture featured a heavy-hexagonal qubit layout optimized for quantum error correction and efficient quantum algorithm execution. This milestone demonstrated that quantum computers were transitioning from proof-of-concept devices to potentially practical computational tools capable of exploring quantum phenomena beyond classical reach. The achievement supported IBM's roadmap toward fault-tolerant quantum computing and showed that systematic engineering improvements could overcome the scaling challenges that had limited earlier quantum processors. Eagle signaled that quantum computers were entering an era in which their state spaces are too large to simulate exactly, even though noise still limits which problems they can solve better than classical alternatives.
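
The exponential memory requirement is easy to check with arithmetic. The sketch below assumes a dense state-vector simulation that stores one double-precision complex amplitude (16 bytes) per basis state; the function name is illustrative. Approximate methods such as tensor networks can handle some larger circuits, so this is an upper bound on brute-force cost, not a hard limit on classical simulation.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store every amplitude of an n-qubit state.

    Assumes a dense state vector with one complex128 value
    (16 bytes) per basis state, i.e. 2**n amplitudes in total.
    """
    return (2 ** num_qubits) * bytes_per_amplitude

GIB = 2 ** 30  # bytes in one gibibyte

for n in (30, 50, 127):
    print(f"{n} qubits: {statevector_bytes(n) / GIB:.3e} GiB")
```

Under these assumptions, 30 qubits need 16 GiB, which fits on a workstation, while 50 qubits already need 16 PiB. At 127 qubits the figure is beyond any conceivable storage system.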

7. Quantum Machine Learning Breakthroughs (2021-2022) - AI Meets Quantum

The convergence of quantum computing and machine learning in 2021-2022 opened new computational paradigms that promised to reshape artificial intelligence and data analysis. Researchers showed that quantum computers could potentially provide speedups, in some theoretical settings exponential ones, for certain machine learning tasks, including pattern recognition, optimization, and data classification problems that form the backbone of modern AI systems. Variational algorithms such as the Quantum Approximate Optimization Algorithm (QAOA) and the Variational Quantum Eigensolver (VQE), which rely on the same hybrid quantum-classical training loop used in much of quantum machine learning, showed promise for optimization problems that classical computers struggle with. These hybrid algorithms run a parameterized quantum circuit that uses superposition and entanglement to represent many candidate solutions at once, while a classical optimizer tunes the circuit's parameters; whether this yields a practical speedup over the best classical methods remains an open question. Demonstrations included quantum neural networks that could learn certain patterns from fewer training examples, quantum algorithms aimed at drug discovery through more accurate modeling of molecular interactions, and quantum optimization routines for financial portfolio management and logistics planning. The milestone wasn't just theoretical: companies like IBM and Google, along with startups like Xanadu, began running small-scale quantum machine learning experiments on real quantum hardware. These achievements suggested that quantum computers might not just complement classical AI but change how we approach machine learning, enabling models that process information in ways classical systems cannot easily replicate. The quantum-AI convergence represented a multiplication of computational possibilities, where quantum mechanics' strange properties could enhance artificial intelligence's pattern recognition and optimization capabilities.

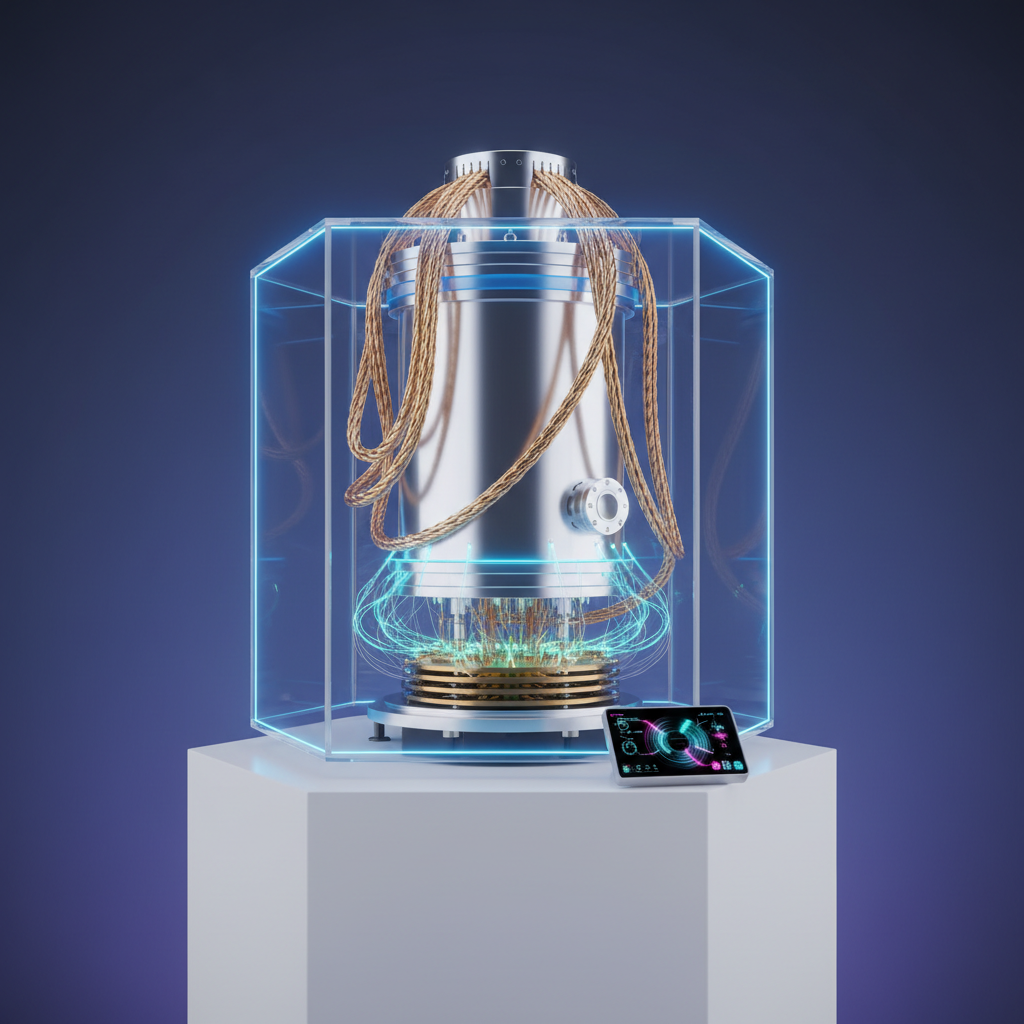

8. Atom Computing's 1000+ Qubit System (2023) - The Thousand-Qubit Threshold

Atom Computing's achievement of creating a quantum computer with over 1000 qubits in 2023 shattered previous scaling records and demonstrated that quantum systems could reach unprecedented sizes while maintaining operational coherence. Using neutral atom technology, where individual atoms are trapped and manipulated using optical tweezers and laser cooling, the company created quantum systems with dramatically more qubits than any previous quantum computer. This breakthrough wasn't merely about quantity—it represented a fundamental advance in quantum system architecture, control, and scalability. The neutral atom approach allows for flexible qubit arrangements, where atoms can be moved and reconfigured during computation, enabling dynamic quantum circuit architectures impossible with fixed superconducting or trapped ion systems. The 1000+ qubit milestone demonstrated that quantum computers could scale beyond the realm of classical simulation for virtually any quantum algorithm, not just specialized cases. The achievement required breakthrough innovations in laser control systems, atom trapping technologies, and quantum error mitigation techniques to maintain coherence across such a large quantum system. This milestone proved that multiple technological approaches could achieve large-scale quantum computing, validating the diversity of quantum computing platforms and suggesting that different technologies might be optimal for different applications. The thousand-qubit threshold represented a psychological and practical barrier, showing that quantum computers were rapidly approaching the scales necessary for fault-tolerant quantum computing and practical quantum advantage across a broad range of applications.

9. The Future Implications - Quantum Computing's Transformative Potential

These eight quantum computing milestones collectively demonstrate that we've entered an era where quantum computers are transitioning from scientific curiosities to transformative computational tools that will reshape multiple industries and scientific disciplines. The progression from Shor's theoretical algorithm to thousand-qubit systems reveals an accelerating trajectory toward practical quantum advantage across diverse applications including cryptography, drug discovery, financial modeling, artificial intelligence, and materials science. Each milestone has not only advanced the technology but fundamentally expanded our conception of computational possibilities, revealing that problems once considered intractable may become routine quantum computations. The implications extend far beyond faster computers—quantum computing promises to enable new types of simulations that could accelerate scientific discovery, optimization algorithms that could revolutionize logistics and resource allocation, and machine learning approaches that could create more powerful artificial intelligence systems. As these technologies mature, they will likely catalyze breakthrough discoveries in quantum chemistry, enabling the design of new materials and drugs; revolutionize cryptography, requiring entirely new security paradigms; and enhance artificial intelligence in ways we're only beginning to understand. The quantum computing revolution isn't just changing what we can compute—it's transforming how we think about computation itself, revealing that the universe's quantum mechanical nature provides computational resources far beyond what classical physics suggested was possible. These milestones represent humanity's growing mastery over quantum phenomena and our ability to harness the fundamental properties of reality for computational purposes, opening pathways to discoveries and capabilities that will define the next century of technological progress.